What would happen if a tanker was destroyed and spilled out there?

Imagine if you showed this to someone in ~2009.

So sick of seeing confidently incorrect people opining, using historical examples, when they have never before cracked open a history book and have no idea of the context.

This has always been the case.

The issue is Twitter boosts them over less engaging experts. The new problem is the medium. Twitter is not a fair forum, and these dumb takes trend deliberately.

Even not-fully-reproducible open-weights models are extremely important because they’re poison to OpenAI, and they know it. It makes what they’re trying to commodify and control effectively free and utilitarian.

But there are fully open models, too, with public training data.

It’s anticompetitiveness.

They want to squash open models, and anyone too small to comply with this.

I say this in every thread, but the real AI “battle” is open-weights ML vs OpenAI style tech bro AI. And OpenAI wants precisely no one to realize that.

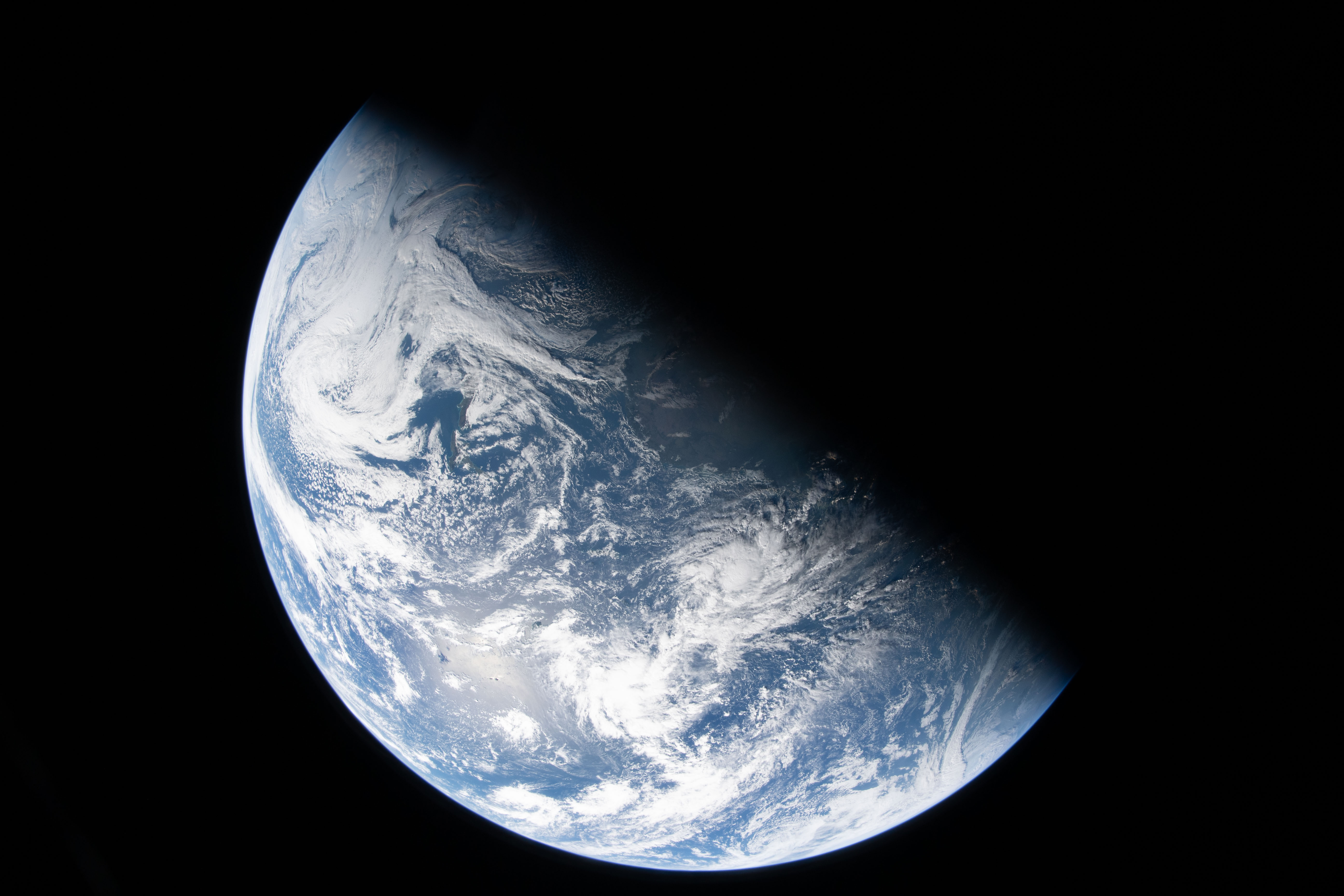

It is, indeed, the Sahara desert. Africa is upside down, but that little tip at the bottom of the photo is the Strait of Gibraltar. That greener lit up coast on the right is the Americas… Brazil, I think?

AFAIK anything past ISO 32,000 is digitally expanded (which could be done with RAW post-processing).

EDIT:

See: https://www.photonstophotos.net/Charts/RN_ADU.htm#Nikon D5_14,Sony ILCE-1M2_14

The old Nikon D5, impressively, doesn’t seem to post-scale even at ISO 102400

Here’s the full res shot from the NASA website:

The photo’s metadata reveals it was taken with a Nikon D5, focal length: 22mm, aperture: f/4, and exposure time: 1/4 sec.

They should have brought a brighter lens, heh.

More:

On a separate note, the top Twitter comments are making my brain rot:

circles aurora

any explanation to this

It’s a shame your mother didn’t swallow…

(seemingly a bot post?)

Good morning right back at you! 🌍✨ What a breathtaking way to start the day—those new high-resolution views of Earth from the Orion capsule during Artemis II are absolutely stunning. The crew (Reid Wiseman, Victor Glover, Christina Koch, and Jeremy Hansen) is well on their way after yesterday’s launch, capturing our planet as a glowing crescent against the void of space from tens of thousands of miles out. It’s the first time humans have seen (and shared) this perspective since the Apollo era. Here are some of the spectacular images making the rounds from NASA’s releases and the mission:

How the hell is the window edge BEHIND the Earth?

Why is the image so grainy for? Is this ai?

Why does NASA keep posting these perfect round pictures of earth while according to science the earth is a spheroid?

(posts a picture of a Google AI search hallucination)

https://pbs.twimg.com/media/HE_cAXKaMAAunQ_?format=jpg

I knew Twitter was bad now, but… Wow.

Not me.

I wanna be there to report every attribution-cropped post I can find, at least in subs where it’s applicable. And repost it without the crop.

Ughhh, I could go on forever, but to keep it short:

Tech bro enshittification: https://old.reddit.com/r/LocalLLaMA/comments/1p0u8hd/ollamas_enshitification_has_begun_opensource_is/

Hiding attribution to the actual open source project it’s based on: https://old.reddit.com/r/LocalLLaMA/comments/1jgh0kd/opinion_ollama_is_overhyped_and_its_unethical/

Constant bugs and broken models from “quick and dirty” model support updates, just for hype.

Breaking standard GGUFs.

Deliberately misnaming models (like the Deepseek Qwen distills and “Deepseek”) for hype.

Horrible defaults (like ancient default models, 4096 context, really bad/lazy quantizations).

A bunch of spam, drama, and abuse on LinkedIn, Twitter, Reddit and such.

Basically, the devs are Tech Bros. They’re scammer-adjacent. I’ve been in local inference for years, and wouldn’t touch ollama if you paid me to. I’d trust Gemini API over them any day.

I’d recommend base llama.cpp or ik_llama.cpp or kobold.cpp, but if you must use a “turnkey” and popular UI, LMStudio is way better.

But the problem is, if you want a performant local LLM, nothing about local inference is really turnkey. It’s just too hardware sensitive, and moves too fast.

I’d be fine if these studios just…vanished

Outside mobile, it would honestly be a boon to gaming.

Think how much attention and funding they suck up from smaller studios/publishers making great games. Folks have no idea what they’re missing.

How would you prove to a cat that those colors exist?

A spectrum test.

Show a human red fading to infrared, or purple fading to ultraviolet, next to cameras that can detect them in false color. Those are colors we can’t see, yet you can see they’re there.

Theoretically, it’d be the same for a cat or dog.

Also, for any interested, desktop inference is basically my autistic interest.

I don’t like Gemma 4 much so far, but if you want to try it anyway:

On Nvidia with no CPU offloading, watch this PR and run it with TabbyAPI: https://github.com/turboderp-org/exllamav3/pull/185

With CPU offloading, watch this PR and the mainline llama.cpp issues they link. Once Gemma4 inference isn’t busted, run it in IK or mainline llama.cpp: https://github.com/ikawrakow/ik_llama.cpp/issues/1572

If you’re on an AMD APU, like a Mini PC server, look at: https://github.com/lemonade-sdk/lemonade

On an AMD or Intel GPU, use either llama.cpp or kobold.cpp with the Vulkan backend.

Avoid ollama like it’s the plague.

Learn chat templating and play with it in mikupad before you use an “easy” frontend, so you understand what it’s doing internally (and know when/how it goes wrong): https://github.com/lmg-anon/mikupad
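
If "chat templating" sounds abstract, here is a minimal sketch of what a frontend does before it sends anything to the model. It assumes the ChatML-style layout that Qwen models use; Gemma and others use different markers, so check the model's own template. The function name `render_chatml` is made up for illustration.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Flatten a list of {"role", "content"} dicts into one prompt string.

    Assumes ChatML-style markers (<|im_start|> / <|im_end|>). Other model
    families use different markers, so this is illustrative only.
    """
    parts = []
    for msg in messages:
        # Each turn is wrapped as: <|im_start|>ROLE\nCONTENT<|im_end|>\n
        parts.append(f"<|im_start|>{msg['role']}\n{msg['content']}<|im_end|>\n")
    if add_generation_prompt:
        # Leave an open assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


if __name__ == "__main__":
    chat = [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Why is the sky blue?"},
    ]
    print(render_chatml(chat))
```

The raw string this prints is the kind of thing you paste into mikupad. If a frontend gets the markers or the trailing assistant turn wrong, the model sees malformed input, which is usually when it "goes crazy".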

But TBH I’d point most people to Qwen 3.5/3.6 or Step 3.5 instead. They seem big, but being sparse MoEs, they can run quite quickly on single-GPU desktops: https://huggingface.co/models?other=ik_llama.cpp&sort=modified

There’s a whole lot of interest in locally runnable ML. It was there even before ChatGPT 3.5 started the tech bro hype train, when tinkerers were messing with GPT-J 6B and GAN models.

In a nutshell, it’s basically Lemmy vs Reddit. Local and community-developed vs toxic and corporate.

They seem to have held back the “big” locally runnable model.

It’s also kinda conservative/old, architecture wise: 16-bit weights, sliding window attention interleaved with global attention. No MTP, no QAT (yet), no tightly integrated vision, no hybrid mamba like Qwen/Deepseek, nothing weird like that. It’s especially glaring since we know Google is using an exotic architecture for Gemini, and has basically infinite resources for experimentation.
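
For anyone unfamiliar with the "sliding window interleaved with global" bit, a rough sketch of the idea in plain Python. The window size, layer count, and the "every Nth layer is global" pattern are assumptions for illustration, not Gemma's actual config, and both helper names are hypothetical.

```python
def attention_mask(seq_len, window=None):
    """Causal mask: mask[q][k] is True when query q may attend to key k.

    window=None means global (full causal) attention; otherwise each query
    only sees the most recent `window` positions, itself included.
    """
    return [
        [k <= q and (window is None or q - k < window) for k in range(seq_len)]
        for q in range(seq_len)
    ]


def layer_windows(num_layers, window, global_every):
    """Assign a window per layer: every `global_every`-th layer is global.

    The interleaving ratio here is a made-up example, not a real model's.
    """
    return [
        None if (i + 1) % global_every == 0 else window
        for i in range(num_layers)
    ]


if __name__ == "__main__":
    for layer, win in enumerate(layer_windows(num_layers=6, window=2, global_every=3)):
        kind = "global" if win is None else f"sliding(window={win})"
        last_row = attention_mask(5, win)[-1]
        print(layer, kind, ["x" if ok else "." for ok in last_row])
```

The sliding layers keep the KV cache small, while the occasional global layer lets information from far back still reach the current token.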

It also feels kinda “deep fried” like GPT-OSS to me, see: https://github.com/ikawrakow/ik_llama.cpp/issues/1572

it is acting crazy. it can’t do anything without the proper chat template, or it goes crazy.

IMO it’s not very interesting, especially with so many other models that run really well on desktops.

it’s a form of private journalism, private opinion, and private art

But without any of the liability hazard.

This is my issue: the big platforms having their cake and eating it. In one breath, they claim to be little open-platform garage startups that can’t possibly be responsible for the content of their users; they’re just a utility. They need protection from Congress. In another breath, they’re the stewards of generations and children, the only ones responsible enough to tame the internet’s criminality. All while making trillions.

They want to be “private content” protected from the government? Fine. Treat them like it, legally.

It is when it warps the behavior of everyone else around you, and everything in charge of your life.

And I’m not just talking about the lost attention. The algorithms are not neutral.

Yeah, that’s going too far, but I understand the reaction to fanning over Valve.

There are a bazillion historical examples of why one should use, not trust, big businesses. They are entities to make transactions with, not people, and they will tighten the screws even if it takes decades.

This is doubly true in the software business.

And if the Valve superfans look at the world in 2026 and somehow don’t see that, I honestly don’t know what to tell them. They’re in such a completely different world than me I don’t know where to start.

Be prepared.

Don’t hate, but don’t trust Valve. Treat your Steam library like you don’t own it, and it could be enshittified at any time, because you don’t, and it could.

In practice, prioritize DRM-free stores when convenient. Or better yet, 1st party game dev stores. Archive any games or saves you actually want to go back to, just in case. Game like your Steam client install could require a subscription at a moment’s notice.

Came off as abrasive, but your point stands.

Political support for the alt-right is booming across Europe. The last thing you European folks need to do is raise your nose at the American tire fire. Deal with your own, before it’s too late.