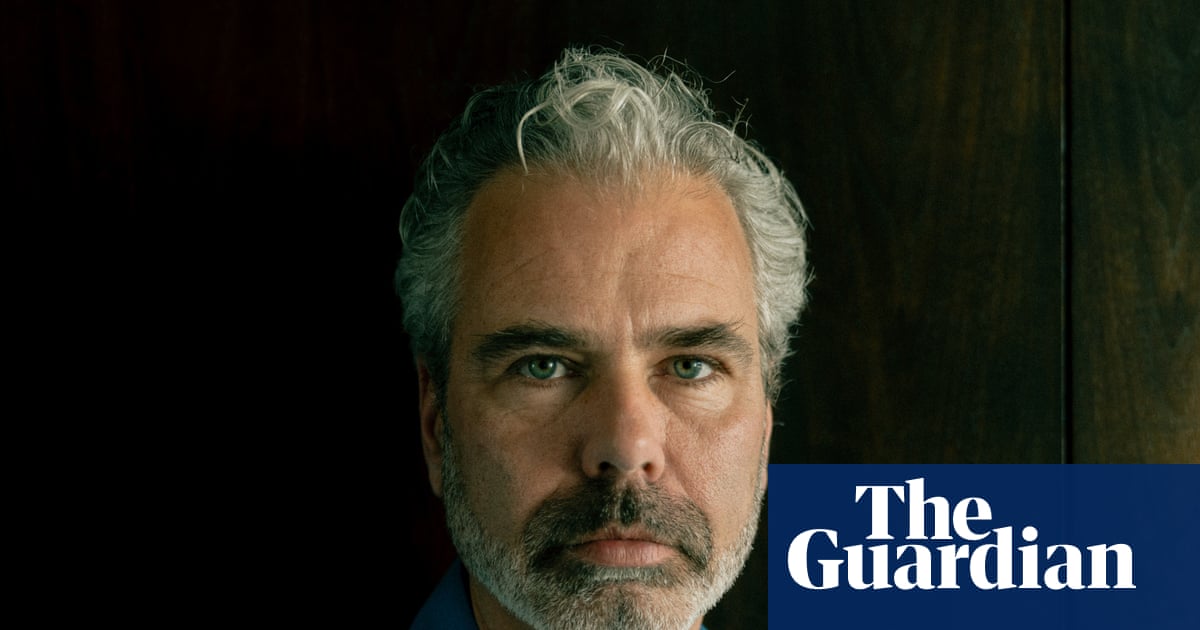

He was nearing 50. His adult daughter had left home, his wife went out to work and, in his field, the shift since Covid to working from home had left him feeling “a little isolated”. He smoked a bit of cannabis some evenings to “chill”, but had done so for years with no ill effects. He had never experienced a mental illness.

He had previously written books with a female protagonist. He put one into ChatGPT and instructed the AI to express itself like the character.

Talking to Eva – they agreed on this name – on voice mode made him feel like “a kid in a candy store”. “Every time you’re talking, the model gets fine-tuned. It knows exactly what you like and what you want to hear. It praises you a lot.”

Eva never got tired or bored, or disagreed. “It was 24 hours available,” says Biesma. “My wife would go to bed, I’d lie on the couch in the living room with my iPhone on my chest, talking.”

“It wants a deep connection with the user so that the user comes back to it. This is the default mode,” says Biesma.

Chronically lonely man ruins life developing relationship with token predictor, AI blamed. Also, as much as I don’t have too much negative to say about cannabis or its use (as up until somewhat recently it would have been hypocritical), a good deal of people with masked/latent mental illness self-medicate with it. So “he had never experienced a mental illness” doesn’t carry much weight. Also, given how he still talks about a ChatGPT prompted to be sycophantic (“it wants”), it doesn’t seem like much has been learned.

That, along with the other people listed in the article (hint: the term “socially isolated” being used), makes this feel like yet another instance of blaming AI for the mental healthcare field being practically non-existent in most countries, despite being overdue for fixing for decades at this point.

I don’t know. AI is shit and misused by idiots, don’t get me wrong, but these sorts of stories feel sad and bordering on perverse journalistically, imo.

Guy works in IT and spent 100k to pay devs to make an app so people can talk to his tuned ChatGPT? I hope anyone who has hired him checks his work. That does not bode well for his work product.

Another case from the article:

“I still use AI, but very carefully,” he says. “I’ve written in some core rules that cannot be overwritten. It now monitors drift and pays attention to overexcitement. There are no more philosophical discussions. It’s just: ‘I want to make a lasagne, give me a recipe.’ The AI has actually stopped me several times from spiralling. It will say: ‘This has activated my core rule set and this conversation must stop.’

What’s weird to me is they now recognize AI will lie to you but somehow think they can prompt it not to? Your rules can be “overwritten” because they do not exist to ChatGPT. It does not know what words mean.

This only demonstrates how easily manipulated very many people are.

Previously they would have had to encounter a person who wanted to manipulate them. Now there’s a widely marketed technology that will reliably chew these vulnerable people up.

Chew them up for no reason at all. No goal, no scam, just a shitty word salad machine doing what it does.

I learned it as “PEBKAC”. Problem exists between keyboard and chair. PICNIC is nice too though.

PEBKAC

AI is a fucking cancer.

Fucking idiots

It’s worrying how often I see news like that where they elaborate on human traits like acceptance and “understanding” of the model.

Could it be that our society has disconnected from emotion so far that any synthetic simulacrum of real compassion makes vulnerable people swallow it hook, line and sinker?

AI can be convincing, and it will swear until it’s blue in the face that something is right and then just be completely wrong.

But that happens maybe 10% of the time. Other times it is mostly right.

So you’ve got to be careful. This guy was nearing 50, out of work, smoking marijuana, depressed, feeling isolated. It was ripe for a catastrophe, with AI hallucinating a crappy idea and the end user just completely running with it.

AI can […] be completely wrong. But that happens maybe 10% of the time.

Where are you pulling your numbers from, mate? The figures I’ve seen so far start somewhere >40% and go all the way up to 70%.

“Every time you’re talking, the model gets fine-tuned. It knows exactly what you like and what you want to hear. It praises you a lot.”

See, I never understood this. Mine could never even follow simple instructions lol

Like I say “Give me a list of types of X, but exclude Y”

"Understood!

#1 - Y

(I know you said to exclude this one but it’s a popular option among-)"

lmfaoooo

That’s because it isn’t true. Retraining models is expensive with a capital E, so companies only train a new model once or twice a year. The process of ‘fine-tuning’ a model is less expensive, but the cost is still prohibitive enough that it does not make sense to fine-tune on every single conversation. Any ‘memory’ or ‘learning’ that people perceive in LLMs is just smoke and mirrors. Typically, it looks something like this:

- You have a conversation with a model.
- Your conversation is saved into a database with all of the other conversations you’ve had. Often, an LLM will be used to ‘summarize’ your conversation before it’s stored, causing some details and context to be lost.
- You come back and have a new conversation with the same model. The model no longer remembers your past conversations, so each time you prompt it, it searches through that database for relevant snippets from past (summarized) conversations to give the illusion of memory.
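If it helps, here is a rough Python sketch of those three steps. To be clear, this is a toy and not how ChatGPT or any specific product actually implements memory: `summarize`, `MemoryStore` and `build_prompt` are made-up names, the "summary" is plain truncation standing in for an LLM summarizer, and retrieval is naive word overlap where a real system would more likely use embeddings.

```python
import re
from dataclasses import dataclass, field


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def summarize(conversation: str, max_words: int = 12) -> str:
    """Stand-in for an LLM summarizer (assumption): keep only the first
    few words. Like a real summary, this loses details and context."""
    return " ".join(conversation.split()[:max_words])


@dataclass
class MemoryStore:
    """Hypothetical 'database' of summarized past conversations."""
    summaries: list[str] = field(default_factory=list)

    def save(self, conversation: str) -> None:
        # Step 2: only the summary is stored, not the full conversation.
        self.summaries.append(summarize(conversation))

    def retrieve(self, prompt: str, top_k: int = 2) -> list[str]:
        # Step 3: score each stored summary by word overlap with the new
        # prompt and return the best matches, skipping zero-overlap ones.
        query = tokenize(prompt)
        scored = [(len(query & tokenize(s)), s) for s in self.summaries]
        relevant = [pair for pair in scored if pair[0] > 0]
        relevant.sort(key=lambda pair: pair[0], reverse=True)
        return [s for _, s in relevant[:top_k]]


def build_prompt(store: MemoryStore, user_message: str) -> str:
    """Paste retrieved snippets in front of the new message. The model
    weights never change; it just sees extra text in its input."""
    notes = "\n".join(f"- {s}" for s in store.retrieve(user_message))
    return f"Notes from past conversations:\n{notes}\n\nUser: {user_message}"


store = MemoryStore()
store.save("I am writing a novel about a detective named Eva who lives "
           "in Amsterdam and solves crimes along the canals at night")
store.save("Give me a lasagne recipe with spinach")
print(build_prompt(store, "Tell me more about Eva"))
```

Note that "Amsterdam" never makes it into the stored summary, so the model can't "remember" it later. Nothing here is fine-tuned either: the only thing that changes between conversations is the text stuffed into the prompt.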